Case Study

Research programme that redirected a product strategy and identified and validated a greenfield opportunity

10 min read

The brief

Lead a research programme to validate a product concept for an underutilised market, understand user workflows and unmet needs, and identify opportunities.

The problem

- Moody's identified a high-value market but had no validated understanding of users, workflows, or whether their product concept would work

- The business was about to invest based on untested assumptions

Key methods

- Jobs-to-be-Done

- Stakeholder alignment workshops (×2)

- Exploratory interviews (10 × 1hr)

- Deep-dive interviews (10 × 1hr)

- Concept testing (10 × 1hr)

- Dovetail thematic coding and analysis

- Wireframing for concept validation

My role

Research lead and sole designer. Designed the research programme, managed stakeholders and the recruitment agency, wrote all interview scripts, facilitated all 30 interviews, completed approximately 85% of thematic coding and analysis, and presented findings to stakeholders and the wider company.


Delivered

- 1,600+ findings across 30 interviews

- 1 greenfield opportunity identified, validated, and championed internally

- 6 innovation areas with validated concept directions

- Existing product strategy pivoted based on evidence

The problem

The business was about to invest in a product users wouldn't adopt

Portfolio managers work under significant time pressure and resist adding new tools. Most consolidate into as few platforms as possible, defaulting to Bloomberg even when it falls short, because switching costs are high.

Moody's planned to reach these users with a product displaying Moody's data in the context of their portfolio. The assumption: seeing personalised data would demonstrate its value and drive purchase.

Three rounds of interviews showed this wouldn't work:

- Portfolio managers wouldn't log in for data they don't currently use

- Data checked quarterly doesn't drive daily adoption

- Without daily adoption, there is no path to purchase

The go-to-market assumption was broken at the foundation.

Research approach

A three-stage research programme designed to build understanding before testing assumptions in a new market

I used the Jobs-to-be-Done framework and Nielsen Norman Group's guidance on exploratory research to structure the programme so that each stage built on the understanding gained in the last. I wrote all scripts, facilitated all 30 interviews, and completed approximately 85% of thematic coding and analysis. My manager completed the remainder using the same method.

- Stage 1: Exploratory interviews (10 × 1hr). Understand how portfolio managers actually work: tools used, where time is spent, where information gets lost. No product assumptions.

- Stage 2: Deep-dive interviews (10 × 1hr). Investigate the problem areas that emerged in Stage 1. Begin testing whether the business's product concept aligned with real workflow needs.

- Stage 3: Concept testing (10 × 1hr). Present potential product directions using clickable Figma prototypes. Validate whether those directions addressed real needs.

Challenges navigated

Five blockers between project start and the first interview, all resolved

Stakeholder misalignment blocked the start of research

Response: Ran 2 alignment workshops and used the delay for internal expert interviews

Recruitment screener not filtering participants correctly

Response: Rebuilt the screener with the agency using domain expert input

Niche users made a field study impractical

Response: Restructured the programme into 30 focused 1-hour interviews

Statistical significance was unachievable

Response: Removed the outcomes survey from the plan

Limited UX team availability

Response: Redirected the team to competitive design research

Analysis

Thematic coding across 30 interviews produced 1,600+ findings. Here is how I organised them for efficient analysis.

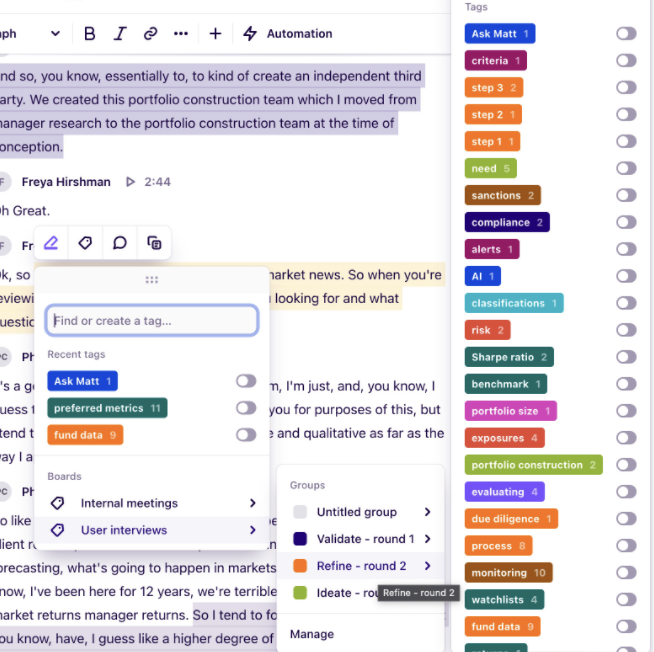

I tagged findings in Dovetail as analysis progressed, building out tags as new themes emerged across interviews rather than defining all categories upfront. I added specific tags and comments in context, so that by the time I grouped findings by theme at the end, the wider significance of each insight was already captured. I organised all findings by the workflow area being investigated, then grouped them by emerging theme within each area.

This structure mattered. Reading across all participants' experience of the same workflow moment made patterns visible in a way that sorting by interview would not. Consistent patterns across participants carried weight. Single-participant outliers were noted but not treated as signals.

My manager completed the remaining 15% or so of the analysis using the same method, taking responsibility for specific workflow areas. Having someone deeply familiar with the research content made consolidation genuinely collaborative.

The pivot

Research gave the business evidence to stop building in the wrong direction

Portfolio managers wouldn't log in regularly for data they didn't use and didn't believe they needed. No UX improvement could fix that.

We couldn't present the finding bluntly. The product had been in development throughout the research programme, and the reaction in the room was resistance: not bad faith, but fear of having nothing to build. We framed it carefully. The concept had real value as a sales tool for Moody's data, and research showed users responded to it in that context. What it couldn't sustain was a standalone subscription model.

The resistance eased because we had a strong alternative to offer.

The greenfield opportunity

Research identified a daily workflow gap with no finance-focused solution

The consistent thread wasn't what portfolio managers were missing from financial data products. It was the manual work they did every day connecting information across tools that don't communicate: Bloomberg, internal systems, spreadsheets, email. No product served as the connective layer.

I kept surfacing findings around this pattern but struggled to make the full picture visible until I found Obsidian: a knowledge management tool built around connecting fragmented information sources. Using it as an analogy, I could show concretely how the same principle applied to a portfolio manager's fragmented daily workflow. Once that frame landed, the opportunity expanded quickly across user roles, Moody's data products, and the API strategy already in development.

The opportunity: a tool at the centre of a portfolio manager's daily workflow, surfacing Moody's content at the exact moment it was relevant. It addressed three problems at once:

- Daily adoption, by embedding in existing workflows rather than requiring new ones

- A natural route to demonstrating Moody's data value in context

- Moody's company-wide goal of a single access point across all products

Design process

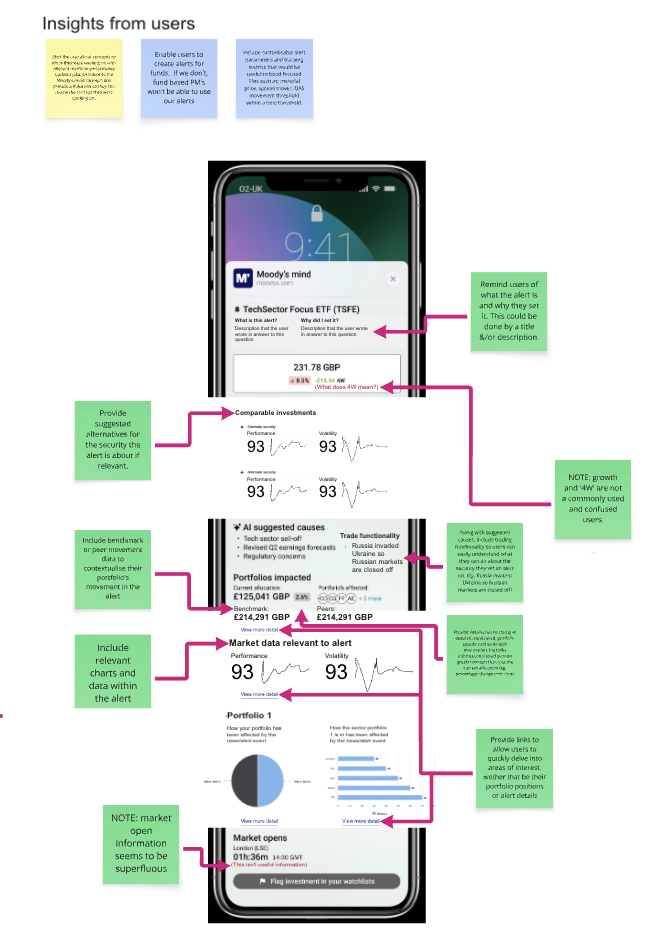

Most final concepts are under NDA. One non-proprietary concept illustrates my process.

Step 1

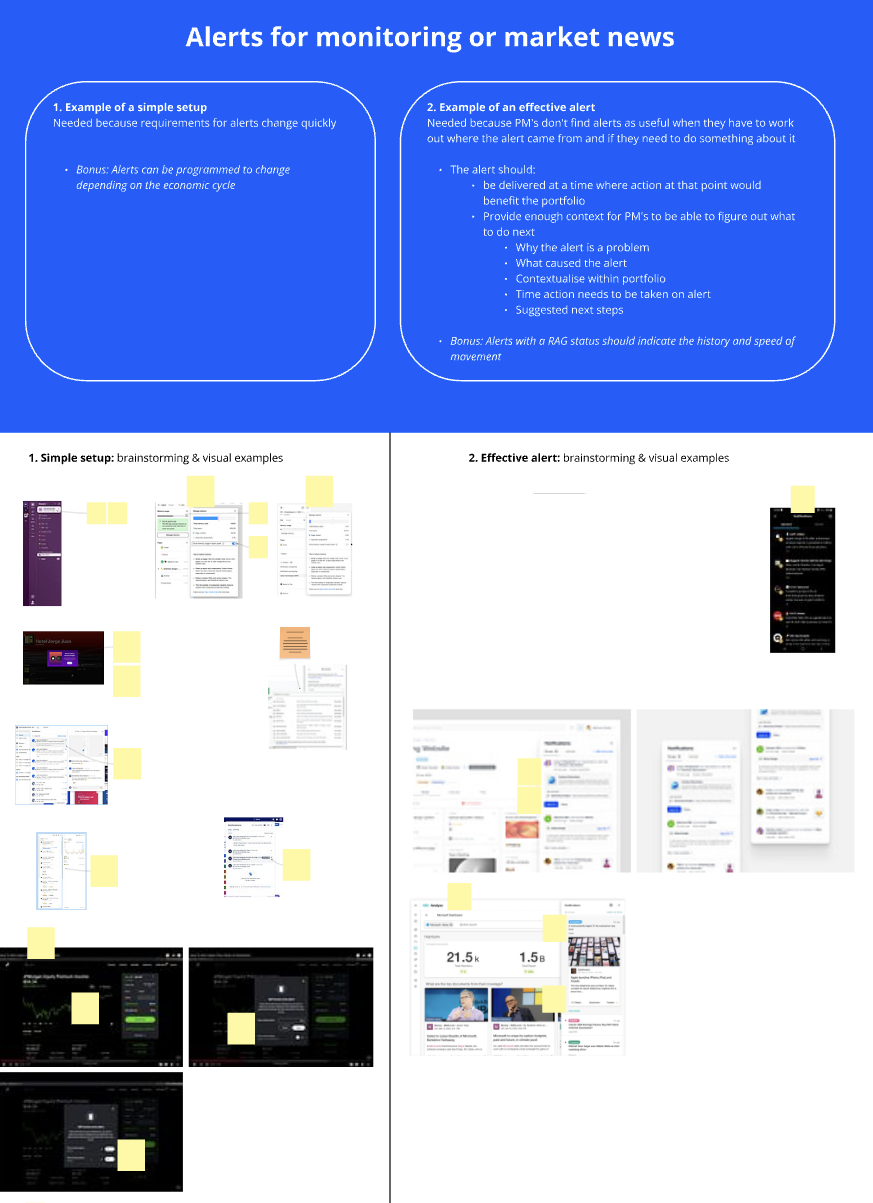

Desk research workshop defined the problem and identified analogous solutions

Step 2

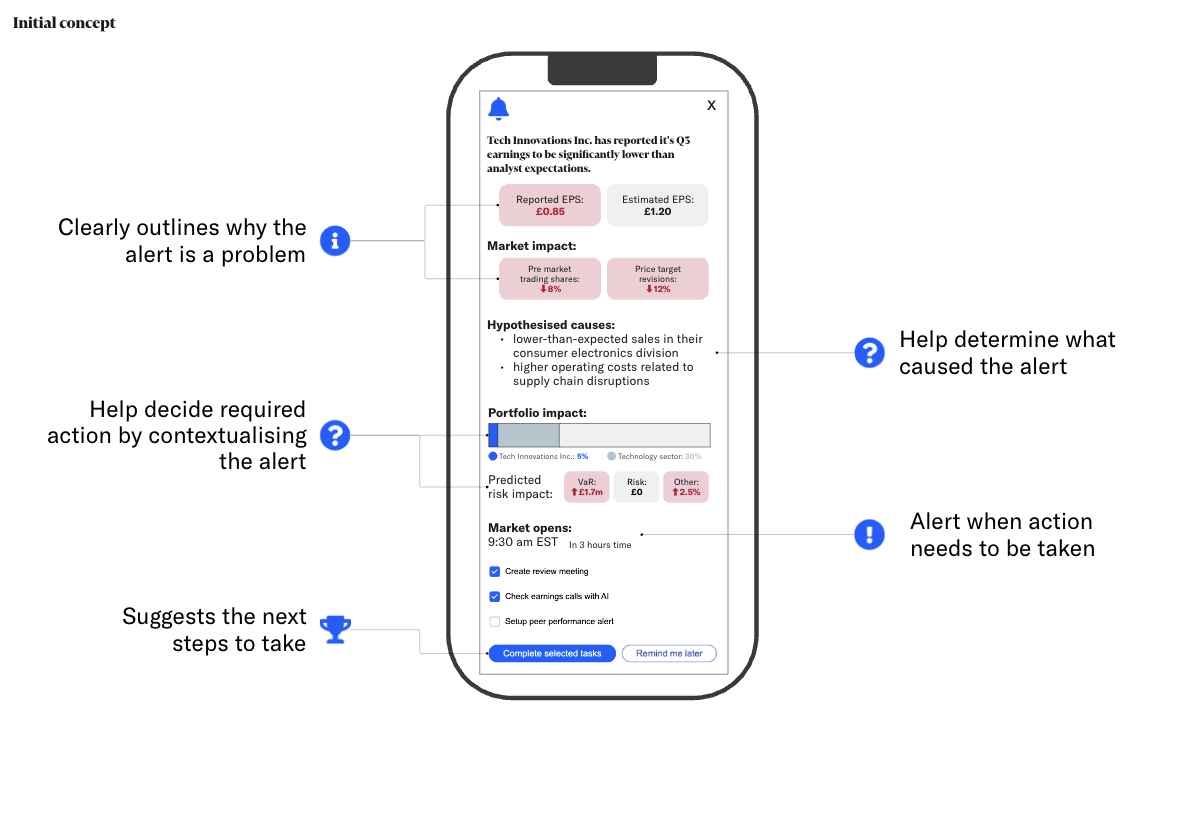

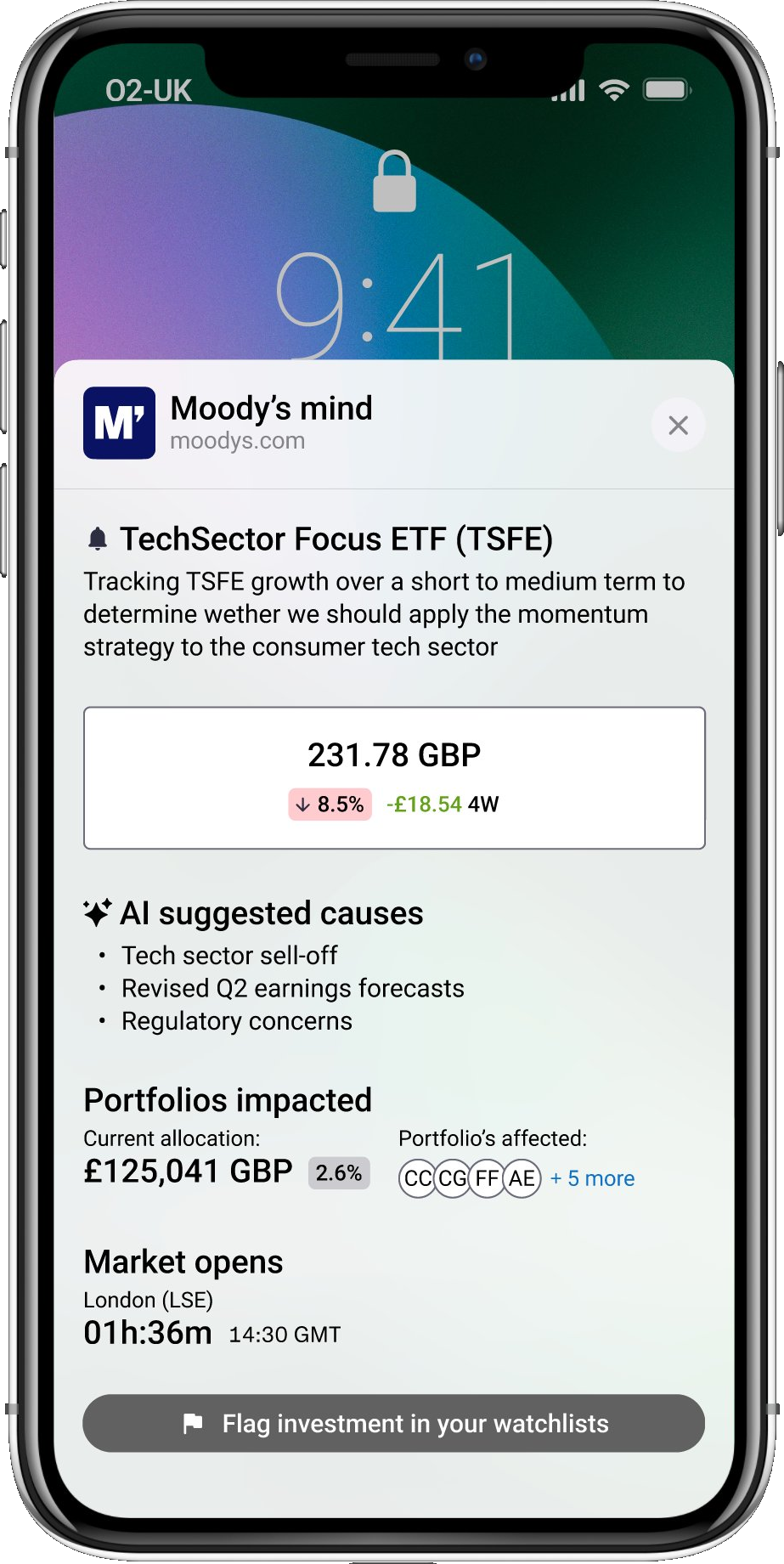

Annotated wireframe connected every design element to a research finding

Step 3

Concept tested using a clickable Figma prototype

Step 4

Findings mapped back to the design to support stakeholder decisions

What testing this concept revealed

- Portfolio managers want fewer, better alerts, not more notifications

- Multi-signal alerts supply context up front; single-threshold triggers leave portfolio managers to do additional research to find that context themselves

- Portfolio managers would accept AI if it gave them avenues for investigation rather than solutions, speeding up their process while keeping them in control

Results

Research redirected a product strategy and validated a greenfield opportunity

- 1,600+ findings synthesised across 30 interviews

- 6 innovation areas identified, each with a validated concept direction

- 1 greenfield opportunity discovered, validated, and championed internally

- Existing product direction pivoted based on research evidence

The original product concept was repurposed as a sales tool for Moody's data, the application research showed it was best suited for.

Next steps

- 3-month pilot with 3–5 target organisations

- Survey across 50+ users to prioritise features

- Longitudinal diary study to verify daily workflow integration